A new wave of AI “humanizer” tools, including platforms like Grubby AI, is intensifying debate around content authenticity and detection limits. As enterprises, educators, and publishers adopt AI at scale, the ability to disguise machine-generated text is raising concerns over trust, transparency, and regulatory oversight across global digital ecosystems.

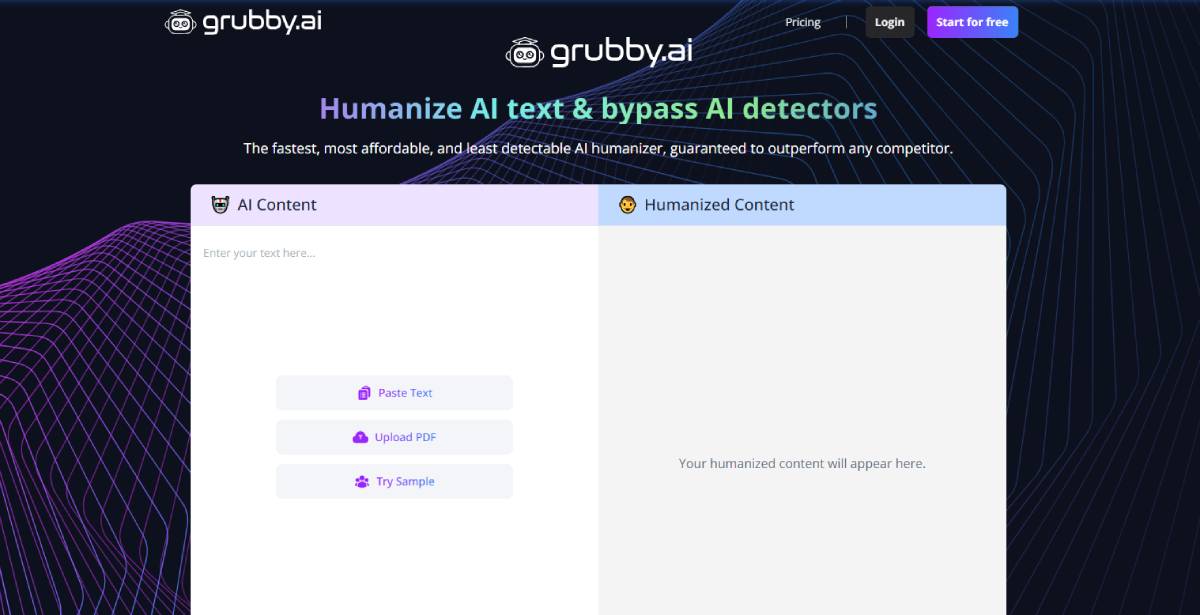

Grubby AI positions itself as an undetectable AI humanizer, designed to rewrite machine-generated text so that it evades AI detection systems. The tool reflects a broader industry shift in which generative AI is used not only for content creation but also for content obfuscation.

The tool arrives as AI detection systems are deployed more widely across academia, recruitment, and publishing workflows. Companies increasingly use automated screening to identify synthetic text, while a parallel set of tools attempts to bypass that screening. The result is a competitive cycle between generation and detection technologies.

The trend is drawing attention from policymakers and enterprise risk teams focused on misinformation, compliance, and content provenance.

The rise of AI humanization tools sits within a broader acceleration of generative AI adoption across industries, including marketing, software development, and customer communication. As models become more capable, distinguishing human-written content from AI-generated text has become increasingly difficult, prompting a parallel market for detection solutions.

Similar “arms races” have long played out in cybersecurity, where attack techniques and intrusion detection evolve together. In the AI context, however, the stakes extend to academic integrity, corporate governance, and media trust. Institutions are now grappling with whether AI-generated content should be labeled, restricted, or fully integrated into workflows.

The expansion of tools like Grubby AI reflects a transition phase in AI governance, where technological capability is outpacing standardized rules and enforcement mechanisms.

Industry analysts note that AI humanization tools highlight a structural gap in current AI governance frameworks: enterprises are rapidly adopting generative AI for productivity gains, but verification systems remain inconsistent across platforms and jurisdictions.

Some AI researchers argue that detection-based approaches may become fundamentally unreliable as language models improve, suggesting that provenance tracking and watermarking could become more viable long-term solutions.

Legal and compliance experts point out that organizations may face increased exposure if AI-generated content is passed off as human-authored in regulated environments such as finance, healthcare, or education. Meanwhile, digital ethics commentators warn that normalization of undetectable AI text could erode trust in online information ecosystems unless transparency standards evolve in parallel.

For businesses, the emergence of AI humanization tools introduces both operational flexibility and reputational risk. Marketing, content production, and customer support functions may benefit from higher output efficiency, but verification challenges could complicate compliance and brand integrity.

For policymakers and regulators, the trend raises urgent questions around disclosure requirements, AI labeling standards, and enforcement mechanisms for synthetic content. Educational institutions and hiring systems may also need to revise evaluation frameworks to account for indistinguishable machine-generated submissions.

Investors in AI infrastructure and SaaS platforms are increasingly evaluating not just generative capability, but trust, traceability, and governance features as core value drivers.

The competition between AI generation and detection technologies is expected to intensify as models become more sophisticated. Future regulatory frameworks may shift toward mandatory content provenance systems rather than detection-only strategies. Enterprises will likely prioritize AI tools that balance productivity with auditability. The next phase of development will be defined less by what AI can generate, and more by how transparently it can be verified.

Source: Grubby AI

Date: April 16, 2026