Police in Richland have raised alarms over a rise in AI-enabled crimes targeting children. The trend shows how generative AI tools are being misused for exploitation, and it is sharpening concerns about public safety, digital regulation, and platform accountability across the global technology sector.

Richland police reported a growing number of incidents involving AI-assisted criminal activity targeting minors, including the misuse of generative tools to create harmful content and to exploit children online. Authorities say these cases are increasingly difficult to trace because of anonymized platforms and synthetic media generation.

Law enforcement agencies are coordinating with cybersecurity specialists to improve detection mechanisms and reporting frameworks. The issue is gaining attention as AI tools become more accessible, lowering technical barriers for malicious actors. Officials stress that prevention, detection, and cross-platform cooperation are now critical priorities in addressing this emerging category of digital crime.

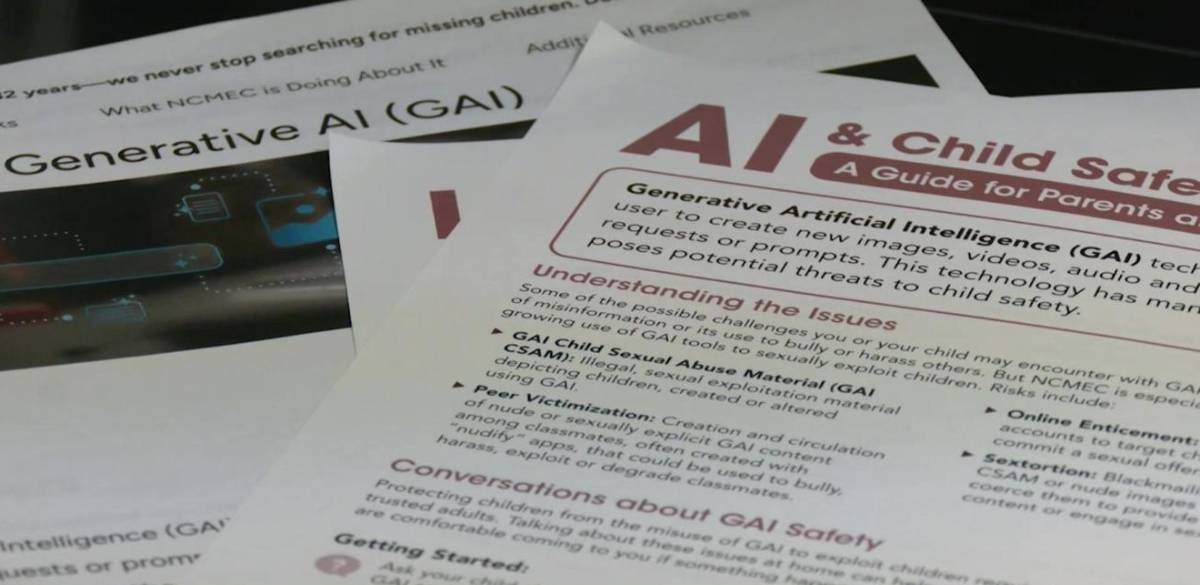

The rise of generative AI has introduced new challenges for digital safety frameworks worldwide. Tools capable of producing highly realistic images, text, and audio have expanded creative and commercial applications, but they have also created new vectors for abuse.

Child protection agencies and cybersecurity experts have warned that synthetic media can be weaponized to create exploitative content or to facilitate grooming online. Online child exploitation has historically evolved alongside technology, from early internet forums to encrypted messaging platforms, and AI represents the latest escalation in that trajectory.

Regulators in multiple jurisdictions are now debating how to classify and control AI-generated harmful content, particularly as existing legal frameworks were not designed to address synthetic media at scale.

Cybersecurity analysts emphasize that AI lowers the barrier to entry for producing harmful content, increasing both the volume and the sophistication of potential threats. Experts note that detection systems must now evolve to identify the patterns of synthetic media rather than relying solely on traditional digital forensics.

Child safety advocates argue that platforms should be held more accountable, particularly companies that deploy generative AI tools without robust safeguards. Law enforcement officials highlight the importance of public awareness, reporting mechanisms, and collaboration with technology providers to track abuse networks.

Policy specialists also warn that fragmented regulation could hinder enforcement efforts, calling for coordinated international frameworks to address AI-driven exploitation crimes more effectively.

For technology companies, the issue raises urgent questions around safety-by-design principles in AI systems, including content filtering, watermarking, and abuse detection mechanisms. Firms may face increased regulatory scrutiny as governments move to tighten controls on generative tools.

For investors, the rising legal and reputational risks associated with unsafe AI deployments could weigh on the valuations of platforms that lack strong governance frameworks.

For policymakers, the trend underscores the need for updated child protection laws that explicitly account for AI-generated content. Cross-border enforcement cooperation will be essential, as digital crimes increasingly transcend jurisdictional boundaries.

Authorities are expected to expand monitoring and invest in AI-driven detection systems to counter misuse of generative technologies. Future regulatory actions may include stricter compliance requirements for AI developers and platform operators. The key uncertainty lies in balancing innovation with safeguards, as rapid AI adoption continues to outpace legal and enforcement capabilities. The issue is likely to remain a central focus in global AI governance discussions.

Source: NBC Right Now

Date: April 16, 2026