Nvidia has introduced an AI-driven texture compression technology that it says can reduce GPU memory usage by up to 85% without perceptible loss of visual quality. The breakthrough signals a potential shift in gaming, graphics, and AI infrastructure efficiency, with implications for developers, hardware manufacturers, and cloud computing providers.

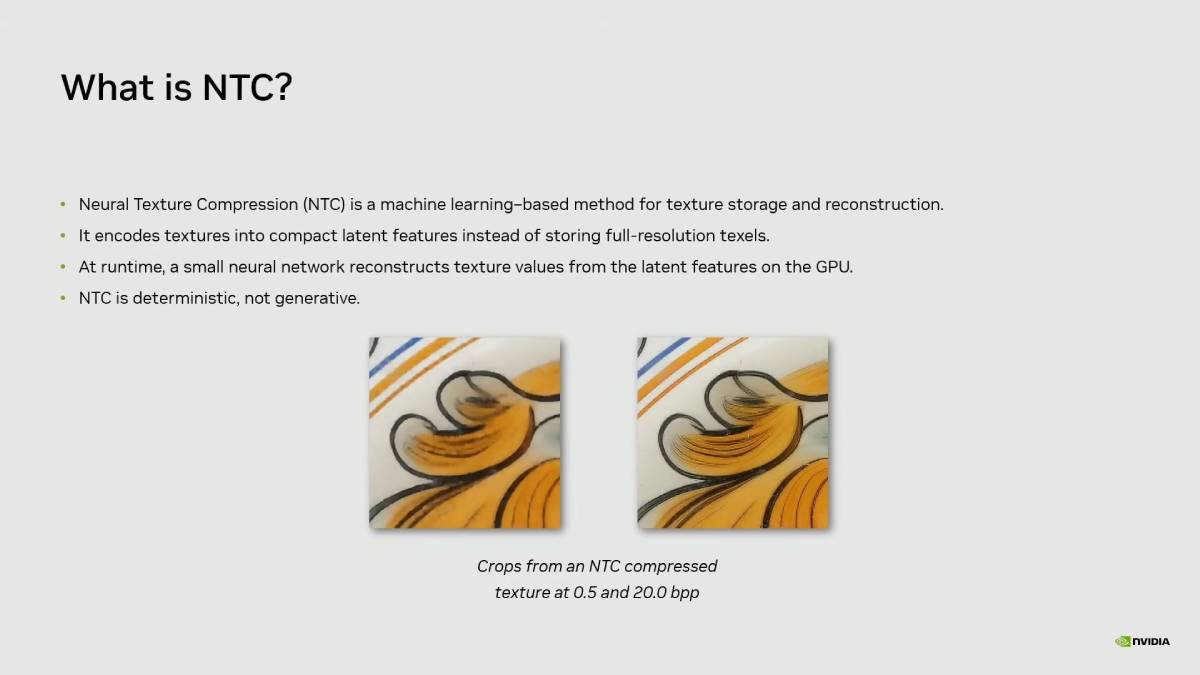

Nvidia’s new “Neural Texture Compression” technology leverages AI models to dramatically reduce VRAM consumption in graphics rendering. Demonstrations showed that textures requiring 6.5GB of memory could be compressed to approximately 970MB while maintaining near-identical visual fidelity.
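The demo figures can be checked against the headline "up to 85%" claim with simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, and it assumes decimal units (1 GB = 1000 MB), since the report does not say which convention was used.

```python
# Illustrative arithmetic only; the 6.5 GB and 970 MB figures come from the demo described above.
# Assumption: decimal units (1 GB = 1000 MB); the report does not state the convention.

def reduction_percent(original_mb: float, compressed_mb: float) -> float:
    """Percentage of memory saved relative to the original size."""
    return (1 - compressed_mb / original_mb) * 100


def compression_ratio(original_mb: float, compressed_mb: float) -> float:
    """How many times smaller the compressed textures are."""
    return original_mb / compressed_mb


original_mb = 6.5 * 1000   # 6.5 GB expressed in MB
compressed_mb = 970        # approximate compressed size from the demo

print(f"Reduction: {reduction_percent(original_mb, compressed_mb):.1f}%")   # Reduction: 85.1%
print(f"Ratio: {compression_ratio(original_mb, compressed_mb):.1f}x")       # Ratio: 6.7x
```

Under binary units (1 GB = 1024 MB) the reduction works out to about 85.4%, so the demo numbers are consistent with the roughly 85% figure either way.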

The innovation is positioned for integration into next-generation GPUs and game engines, potentially transforming how assets are stored and processed. Nvidia showcased the technology in controlled demos, emphasizing zero perceptible loss in quality.

The move comes amid increasing demand for high-performance graphics and AI workloads, where memory bandwidth and efficiency remain critical constraints across gaming, enterprise AI, and cloud-based rendering environments.

The development aligns with a broader trend across global markets where AI is increasingly used to optimize hardware performance rather than just software capabilities. As gaming graphics become more complex, with higher resolutions, ray tracing, and real-time rendering, VRAM limitations have emerged as a bottleneck.

Historically, improvements in GPU performance relied heavily on hardware upgrades, increasing costs for both consumers and enterprises. However, AI-based optimization techniques, such as upscaling and compression, are redefining this paradigm.

Nvidia has previously led innovations like DLSS (Deep Learning Super Sampling), which uses AI to enhance image quality while reducing computational load. Neural Texture Compression represents a natural extension of this strategy.

The technology also intersects with broader AI infrastructure demands, where memory efficiency is crucial for scaling data centers, gaming platforms, and edge computing environments in a cost-effective manner.

Industry analysts view Nvidia’s latest innovation as a strategic move to reinforce its dominance in both gaming and AI hardware ecosystems. Experts suggest that reducing memory requirements without sacrificing quality could significantly extend the lifecycle of existing GPUs, lowering barriers for developers and users.

While Nvidia has not disclosed full commercialization timelines, internal demonstrations highlight confidence in the technology’s maturity. Analysts also point out that such advancements could influence competitive dynamics, pressuring rivals to accelerate similar AI-driven optimizations.

From a technical perspective, experts emphasize that neural compression could redefine asset pipelines, allowing studios to create richer environments without being constrained by hardware limitations. The development also underscores Nvidia’s broader strategy of embedding AI deeply into hardware performance optimization, rather than treating it solely as a separate computational workload.

For global executives, this breakthrough could reshape cost structures across gaming, cloud computing, and AI infrastructure. Reduced memory requirements translate into lower hardware costs, improved scalability, and enhanced performance efficiency.

Game developers may gain the ability to deliver higher-quality experiences without requiring users to upgrade hardware frequently, potentially expanding market reach. Cloud providers could also benefit from reduced resource consumption, improving margins.

From a policy standpoint, improved efficiency aligns with sustainability goals by reducing energy consumption associated with high-memory workloads. However, it may also intensify competition in the GPU market, prompting regulatory scrutiny in regions monitoring tech dominance.

Looking ahead, the key question is how quickly Neural Texture Compression will be adopted across game engines and enterprise platforms. Industry observers will watch for integration into upcoming GPU releases and developer tools.

If successfully scaled, the technology could redefine performance benchmarks in graphics computing. However, real-world deployment, compatibility, and developer adoption will determine its long-term impact on the industry.

Source: Tom’s Hardware

Date: April 5, 2026