Distinguishing humans from machines is getting harder as AI-generated interactions become increasingly convincing across digital platforms. The debate around “verified human” systems reflects a broader shift in online authentication, with implications for cybersecurity, digital trust frameworks, and global platform governance as artificial intelligence blurs identity boundaries.

The discussion centers on verification systems designed to confirm whether online users are human, as AI-generated responses become harder to detect. Paradoxically, even a genuine human's confirmation response can resemble AI output, which highlights systemic vulnerabilities in digital authentication.

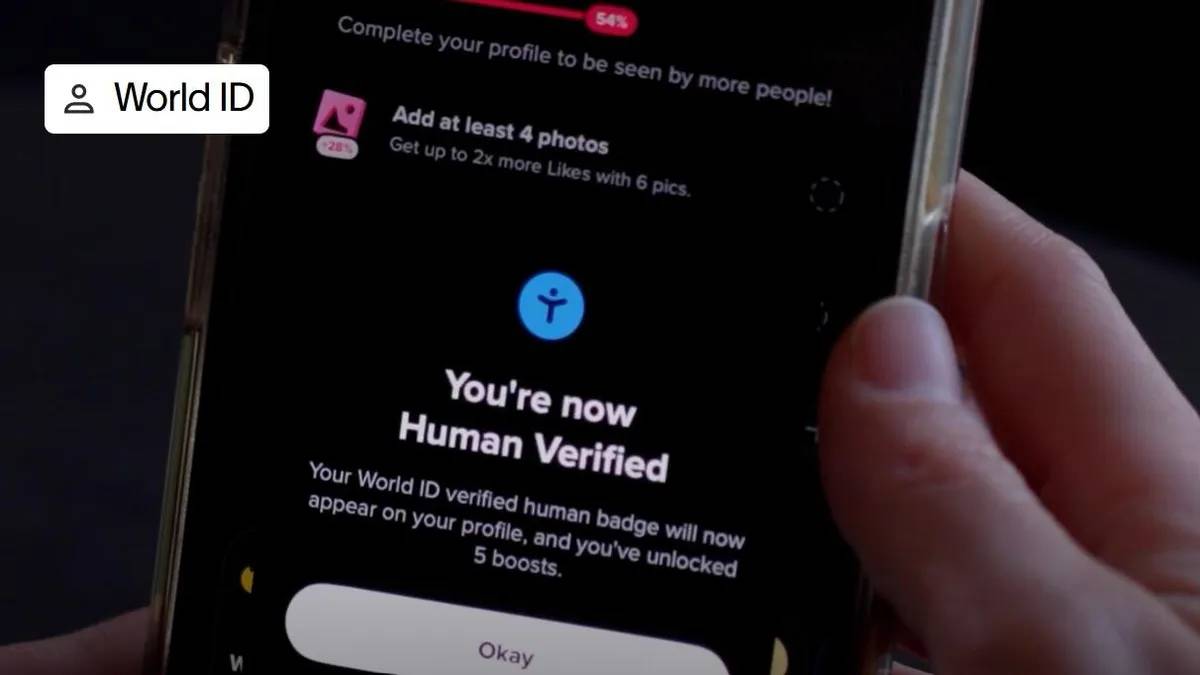

Tech platforms are experimenting with advanced verification layers, including behavioral analysis and biometric signals, to strengthen identity assurance. CNET reports highlight growing concern over how conversational AI systems can replicate human-like interaction patterns, complicating traditional CAPTCHA-style defenses.

The issue is becoming central to digital trust infrastructure, especially as generative AI tools scale globally and AI-generated content grows increasingly indistinguishable from human communication. As generative systems evolve, traditional verification tools are proving insufficient to maintain trust and security online.

Historically, platforms relied on simple tests such as image recognition or behavioral checks to distinguish bots from humans. However, the rise of advanced large language models has disrupted these mechanisms, forcing a shift toward more complex AI-driven authentication frameworks.

This evolution is occurring alongside the rapid expansion of AI platforms and frameworks across industries, where identity verification is becoming a foundational layer of digital infrastructure. Governments and technology firms are now exploring scalable solutions to preserve trust in online ecosystems.

Cybersecurity analysts suggest that the gap between human and AI-generated interaction is rapidly narrowing, creating a structural challenge for digital identity systems. Experts note that adversarial AI models can now simulate human-like responses in real time, weakening traditional verification methods.

Industry observers highlight that future authentication systems may rely heavily on adaptive AI frameworks capable of continuously learning user behavior patterns. This shift is expected to move beyond static verification toward dynamic identity modeling.

Digital trust specialists emphasize that the rise of conversational AI platforms is forcing a redesign of cybersecurity architecture, with identity verification becoming a continuous rather than one-time process across digital platforms.
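To make the idea of continuous, adaptive verification concrete, the following is a minimal sketch rather than any platform's actual method. It assumes a single hypothetical behavioral signal (for example, a typing interval in milliseconds), keeps a running profile with an exponentially weighted moving average, and flags samples that deviate sharply from that profile. The class name `BehaviorProfile`, its parameters, and the thresholds are illustrative assumptions.

```python
class BehaviorProfile:
    """Running profile of one behavioral signal (hypothetical example)."""

    def __init__(self, alpha=0.2, threshold=3.0, warmup=5):
        self.alpha = alpha          # how quickly the profile adapts to new samples
        self.threshold = threshold  # deviation, in running std units, that triggers a flag
        self.warmup = warmup        # samples to see before any flag is raised
        self.mean = 0.0
        self.var = 0.0
        self.count = 0

    def observe(self, value):
        """Score a sample against the profile, then update the profile.

        Returns True when the sample looks anomalous, meaning a step-up
        check (such as re-authentication) might be warranted.
        """
        if self.count == 0:
            # First sample seeds the profile.
            self.mean = float(value)
            self.count = 1
            return False

        deviation = value - self.mean
        std = self.var ** 0.5
        anomalous = (
            self.count >= self.warmup
            and std > 0
            and abs(deviation) > self.threshold * std
        )

        if not anomalous:
            # Only learn from samples that fit the profile, so an outlier
            # cannot immediately drag the baseline toward itself.
            self.mean += self.alpha * deviation
            self.var = (1 - self.alpha) * (self.var + self.alpha * deviation ** 2)

        self.count += 1
        return anomalous


if __name__ == "__main__":
    profile = BehaviorProfile()
    for sample in [100, 110, 95, 105, 98, 102, 400]:
        print(sample, profile.observe(sample))
```

The check runs on every sample instead of once at login, which is the sense in which verification becomes continuous. A real system would combine many signals and would need to weigh the privacy implications of collecting them.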

For global executives, the shift underscores the growing importance of robust AI-driven identity verification systems as part of enterprise cybersecurity strategy. Businesses operating digital platforms may need to invest in advanced authentication infrastructure to maintain user trust.

Investors are likely to view cybersecurity and identity verification technologies as critical growth sectors within the broader AI ecosystem. However, implementation complexity and privacy concerns may influence adoption timelines.

From a policy perspective, regulators may increasingly focus on establishing global standards for AI-generated content labeling, identity verification, and platform accountability in digital communication environments.

Looking ahead, the evolution of AI-driven identity verification will likely accelerate as generative models become more sophisticated. Stakeholders should watch for the integration of real-time behavioral analytics and biometric authentication into major platforms.

As AI systems continue to mimic human interaction more convincingly, the boundary between user and machine will become a central governance challenge for digital ecosystems worldwide.

Source: CNET

Date: April 2026