A2E has introduced a platform offering “uncensored” AI-generated video, a move that could disrupt content creation. The launch highlights growing tension between innovation and regulation, with implications for media industries, platform governance, and global digital policy.

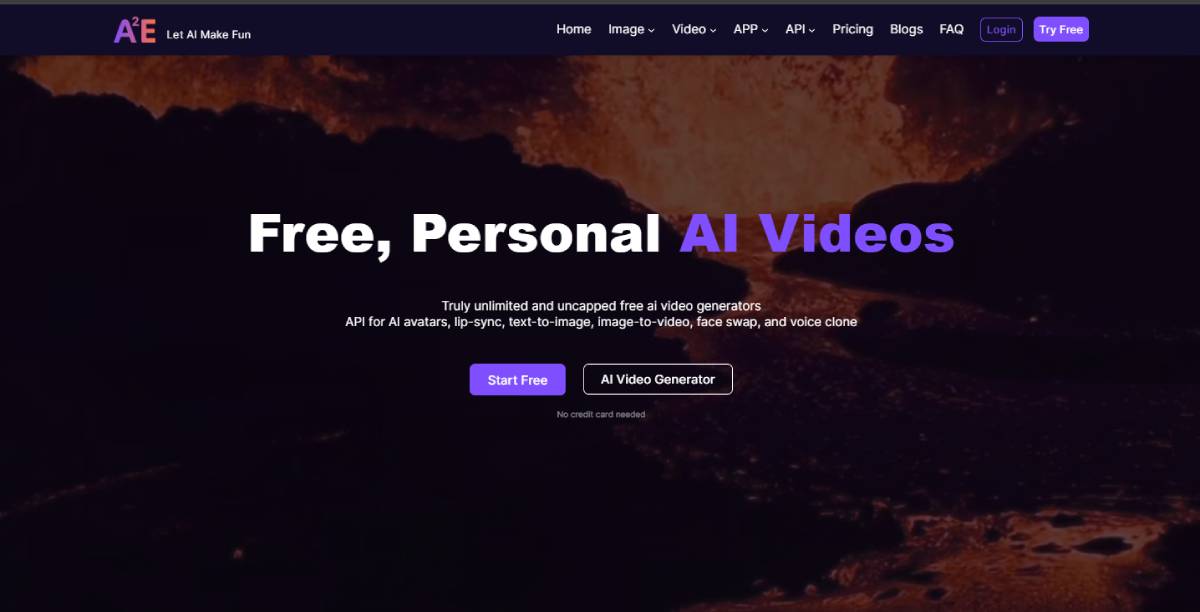

A2E has launched an AI video generation platform that emphasizes minimal content restrictions, enabling users to create a wide range of synthetic videos. The platform positions itself as an open alternative to more tightly regulated AI tools, which often impose strict moderation policies.

The offering leverages generative AI models capable of producing video content from text prompts, reflecting rapid advancements in multimodal AI technologies. Its “uncensored” positioning differentiates it in a crowded market but also raises questions around misuse, compliance, and ethical boundaries.

The launch comes amid increasing global scrutiny of AI-generated media, particularly concerning misinformation, intellectual property, and content safety.

The emergence of platforms like A2E aligns with a broader trend in generative AI, where tools are becoming more powerful, accessible, and capable of producing highly realistic media. Over the past two years, AI video generation has evolved rapidly, driven by advancements in deep learning and increased availability of compute resources.

However, this progress has been accompanied by growing concerns over content governance. Major technology companies have implemented safeguards to limit harmful or misleading outputs, particularly in areas such as deepfakes and sensitive content.

The concept of “uncensored” AI tools introduces a new dynamic, potentially challenging existing regulatory frameworks. Governments worldwide are actively exploring policies to address the risks associated with synthetic media, including misinformation, privacy violations, and societal impact, making this a critical moment for the industry.

Industry analysts view the launch of A2E as indicative of a broader divergence in AI development philosophies, with open, unrestricted systems on one side and tightly governed platforms on the other. Experts argue that while fewer restrictions can foster innovation and creative freedom, they also increase the risk of misuse.

Technology policy specialists emphasize that the absence of strong guardrails could expose platforms to regulatory intervention, particularly in regions with strict digital content laws. At the same time, some developers advocate for open access, suggesting that innovation thrives in less constrained environments.

Market observers note that user demand for flexible AI tools is growing, but trust and safety considerations will ultimately shape long-term adoption. The balance between openness and responsibility is expected to remain a central debate in the AI ecosystem.

For businesses, platforms like A2E present both opportunities and risks. Companies may leverage such tools for rapid content creation, marketing, and creative experimentation, but must carefully manage brand safety and legal exposure.

For investors, the rise of less-restricted AI platforms signals a high-growth but high-risk segment within the generative AI market.

From a policy perspective, the development intensifies the need for clear regulatory frameworks governing AI-generated content. Governments may accelerate efforts to enforce transparency, accountability, and safeguards to prevent misuse while supporting innovation.

The trajectory of platforms like A2E will depend on how regulators and markets respond to the trade-offs between openness and control. Increased scrutiny and potential regulation are likely, particularly in sensitive content domains. Decision-makers should monitor evolving policies and platform practices closely, as the governance of AI-generated media becomes a defining issue in the digital economy.

Source: A2E

Date: April 10, 2026