A significant disclosure in the AI industry has raised questions around transparency and intellectual ownership, as Cursor confirmed its coding model was built on technology from Moonshot AI. The revelation highlights growing scrutiny over AI development practices, with implications for global competition, trust, and regulatory oversight.

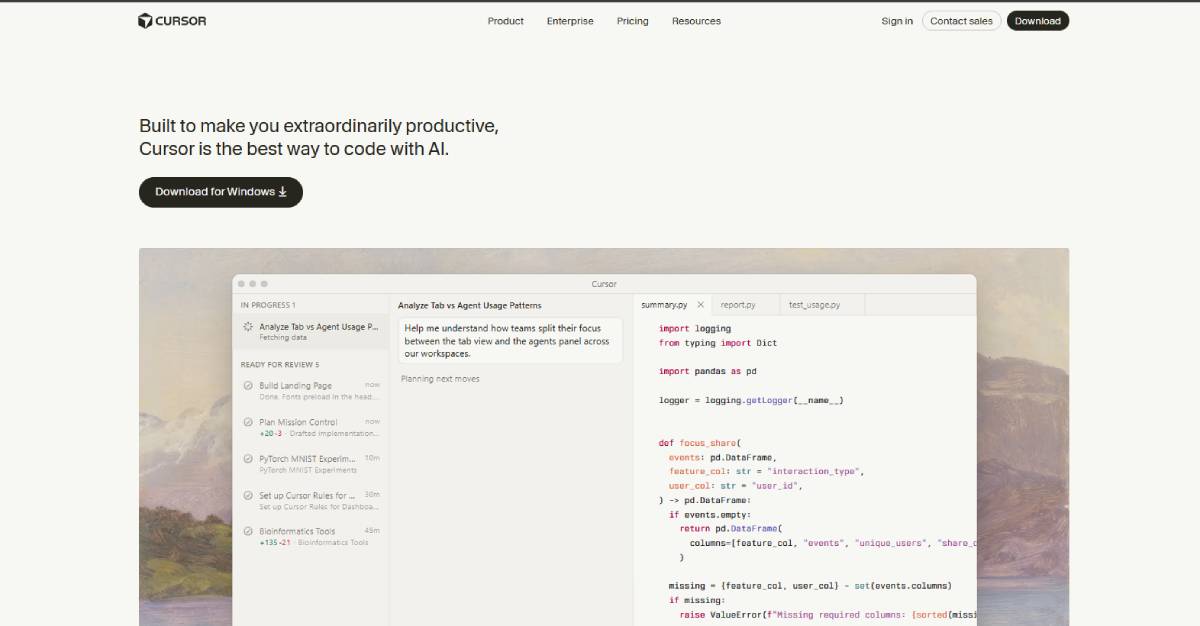

Cursor, an AI-powered coding assistant platform, acknowledged that its latest model is built on top of Kimi, developed by Moonshot AI. The admission follows industry speculation regarding the origins of the model’s capabilities.

The disclosure places Cursor among a growing number of firms leveraging external AI models to accelerate development. Stakeholders include developers, enterprise users, investors, and regulators assessing transparency and compliance.

The case also introduces a geopolitical dimension, as reliance on Chinese-developed AI technology may attract scrutiny in Western markets. It underscores the interconnected nature of global AI innovation, where collaboration and dependency often intersect with competitive and regulatory concerns.

The development aligns with a broader trend across global AI markets where companies increasingly build on existing foundation models rather than developing systems entirely in-house. This approach accelerates innovation but raises questions around intellectual property, attribution, and competitive differentiation.

In recent years, the AI ecosystem has become highly interconnected, with models, datasets, and tools often crossing organizational and national boundaries. At the same time, geopolitical tensions, particularly between the U.S. and China, have intensified scrutiny over technology dependencies.

Transparency in AI development has become a critical issue, as enterprises and regulators demand clarity on how models are built, trained, and deployed. Cases like this highlight the need for clearer standards around disclosure and accountability, especially as AI systems become integral to business operations and decision-making.

Industry analysts note that Cursor’s admission reflects a broader reality in AI development, where leveraging existing models is both common and commercially efficient. Experts suggest that the key issue is not the use of external technology, but the level of transparency provided to users and stakeholders.

Some analysts warn that reliance on third-party models, especially those from different geopolitical jurisdictions, can introduce risks related to data security, compliance, and long-term strategic control.

Others argue that such collaborations demonstrate the global nature of AI innovation, where breakthroughs are often built on shared advancements. Industry leaders emphasize the importance of clear communication, robust licensing agreements, and compliance frameworks to maintain trust and credibility in AI markets.

For global executives, the development underscores the importance of transparency in AI sourcing and development. Companies may need to disclose dependencies on external models more clearly to maintain trust with customers and regulators.

Investors could place greater emphasis on originality, control, and risk exposure when evaluating AI firms. From a policy perspective, governments may introduce stricter disclosure requirements and guidelines around cross-border AI collaboration. The case could also influence procurement decisions, particularly in sensitive sectors where the origin of AI technology is a critical factor.

Looking ahead, the AI industry is likely to face increased pressure to standardize transparency and disclosure practices. Companies will need to balance speed of innovation with accountability and trust.

As global competition intensifies, the origin and architecture of AI models will become key differentiators. For decision-makers, understanding these dynamics will be essential in navigating the evolving AI landscape.

Source: TechCrunch

Date: March 22, 2026