Researchers have unveiled a new tool designed to make AI’s contribution to student writing visible, signaling a shift toward transparency in the AI platforms used in education. The tool carries implications for academic integrity, edtech markets, and the policy frameworks governing AI-assisted learning.

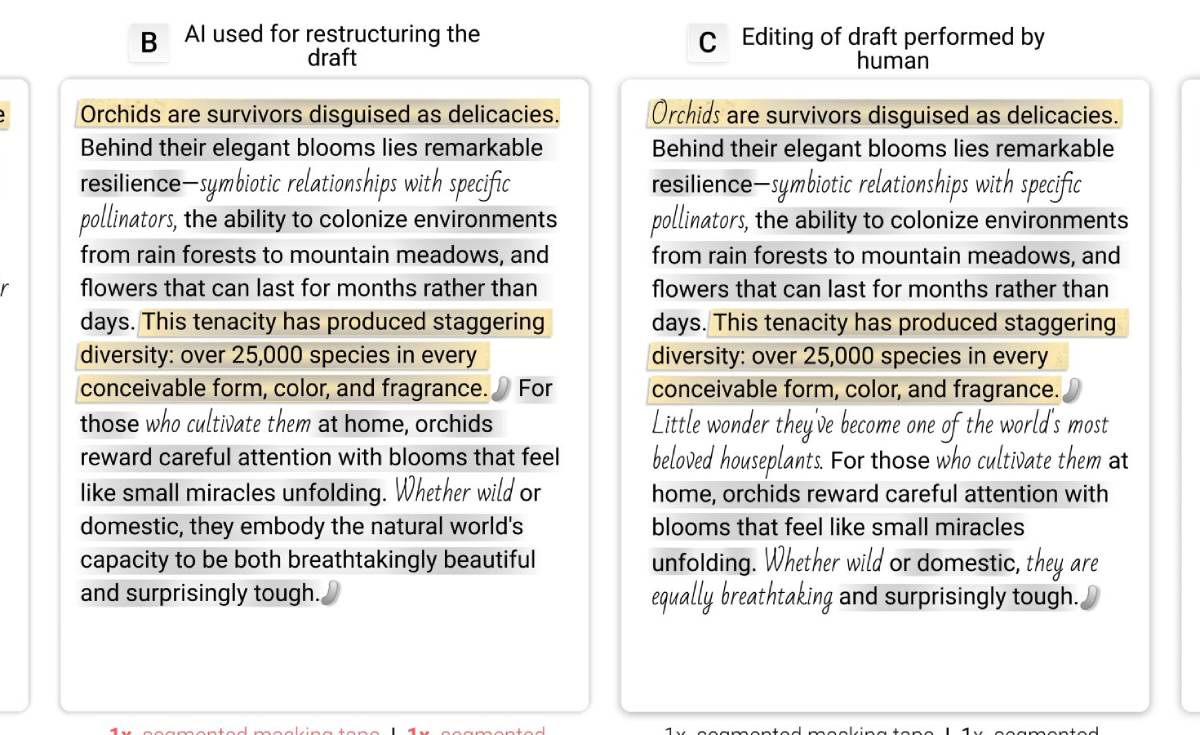

A newly developed tool enables educators to identify and visualize how AI platforms contribute to student-written content. The system tracks AI-assisted inputs, edits, and suggestions, offering a clearer breakdown of human versus machine-generated contributions. It is designed to integrate with existing educational workflows, supporting teachers in evaluating originality and effort.
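The article does not describe how the tool computes its breakdown. As a rough illustration only, the idea of separating human from machine contributions can be sketched in Python, assuming a hypothetical edit log in which every change is labeled with its origin. The `EditEvent` record, the `"student"` and `"ai"` labels, and the character-count metric are all assumptions made for this sketch, not details of the actual system.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class EditEvent:
    """One recorded change to a draft (hypothetical log format)."""
    origin: str  # assumed labels: "student" or "ai"
    kind: str    # "input", "edit", or "suggestion"
    text: str    # text that this event added to the draft


def contribution_breakdown(events):
    """Return each origin's share of the total characters added."""
    chars = Counter()
    for event in events:
        chars[event.origin] += len(event.text)
    total = sum(chars.values())
    if total == 0:
        return {}  # nothing was written, so there is nothing to attribute
    return {origin: count / total for origin, count in chars.items()}


# Illustrative log: two student events and one accepted AI suggestion.
log = [
    EditEvent("student", "input", "Rivers shape the land. "),
    EditEvent("ai", "suggestion", "Over time, erosion carves valleys. "),
    EditEvent("student", "edit", "I saw this on a field trip."),
]
shares = contribution_breakdown(log)
for origin, share in sorted(shares.items()):
    print(f"{origin}: {share:.0%}")
```

A character count is a crude proxy for effort; a real system would presumably also need to account for deletions, rewrites of AI text by the student, and suggestions that were viewed but rejected.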

Developed amid growing reliance on generative AI tools in classrooms, the technology addresses concerns around plagiarism and undisclosed AI assistance. The initiative reflects broader efforts to create accountability within AI frameworks, ensuring that students and institutions maintain transparency while still leveraging AI-driven productivity tools.

The development aligns with a broader trend across global education systems where AI adoption is accelerating faster than institutional safeguards. Generative AI platforms have become widely used by students for drafting essays, solving problems, and enhancing writing quality.

However, this rapid integration has created challenges for educators attempting to distinguish between original student work and AI-assisted outputs. Traditional plagiarism detection tools, which work by matching submissions against existing sources, are often ineffective against AI-generated text because newly generated text has no source to match. That gap is prompting demand for more advanced solutions.

At the same time, universities and schools worldwide are revising academic integrity policies to address AI usage. Some institutions have embraced AI as a learning aid, while others have imposed strict limitations.

This evolving landscape underscores the need for AI frameworks that prioritize transparency, enabling responsible use without undermining educational outcomes or assessment credibility.

Education experts argue that tools like this represent a critical step toward balancing innovation with accountability. Analysts note that AI platforms are unlikely to be removed from classrooms; instead, the focus is shifting toward managing their use effectively.

Academic leaders emphasize that transparency, not prohibition, is the sustainable path forward. By making AI contributions visible, educators can better assess student understanding and engagement.

Technology experts also highlight that such tools could set new standards for explainability within AI frameworks, extending beyond education into enterprise and regulatory contexts. However, some critics caution that over-reliance on monitoring tools could create privacy concerns or discourage creative use of AI.

Overall, the consensus suggests that visibility into AI usage will become a cornerstone of trust in digital learning environments.

For edtech companies, the shift could redefine product development strategies, with increased emphasis on transparency features within AI platforms. Vendors may need to integrate tracking and explainability capabilities to remain competitive.

Investors could view tools that audit and monitor AI usage as an emerging segment within the AI market. Educational institutions will need to update policies, balancing enforcement with flexibility to encourage responsible AI use.

For policymakers, the development raises broader questions about disclosure requirements for AI-generated content across sectors. Regulations may evolve to mandate transparency standards, influencing how AI frameworks are deployed in education and beyond.

Looking ahead, transparency tools are likely to become standard components of AI platforms used in education. Decision-makers should monitor adoption rates and how institutions integrate these systems into assessment models.

As AI frameworks continue to evolve, the ability to track and explain machine contributions will be critical to maintaining trust. The next phase of AI in education will hinge not just on capability, but on accountability.

Source: Phys.org

Date: April 2026