The FDA is accelerating the migration of its AI platform Elsa from Anthropic’s Claude to alternative models such as Google’s Gemini. The transition has raised urgent technical, compliance, and regulatory risk concerns for clinical trial sponsors, affecting document review integrity, submission processes, and operational strategies. This shift signals a broader evolution in AI governance across life sciences.

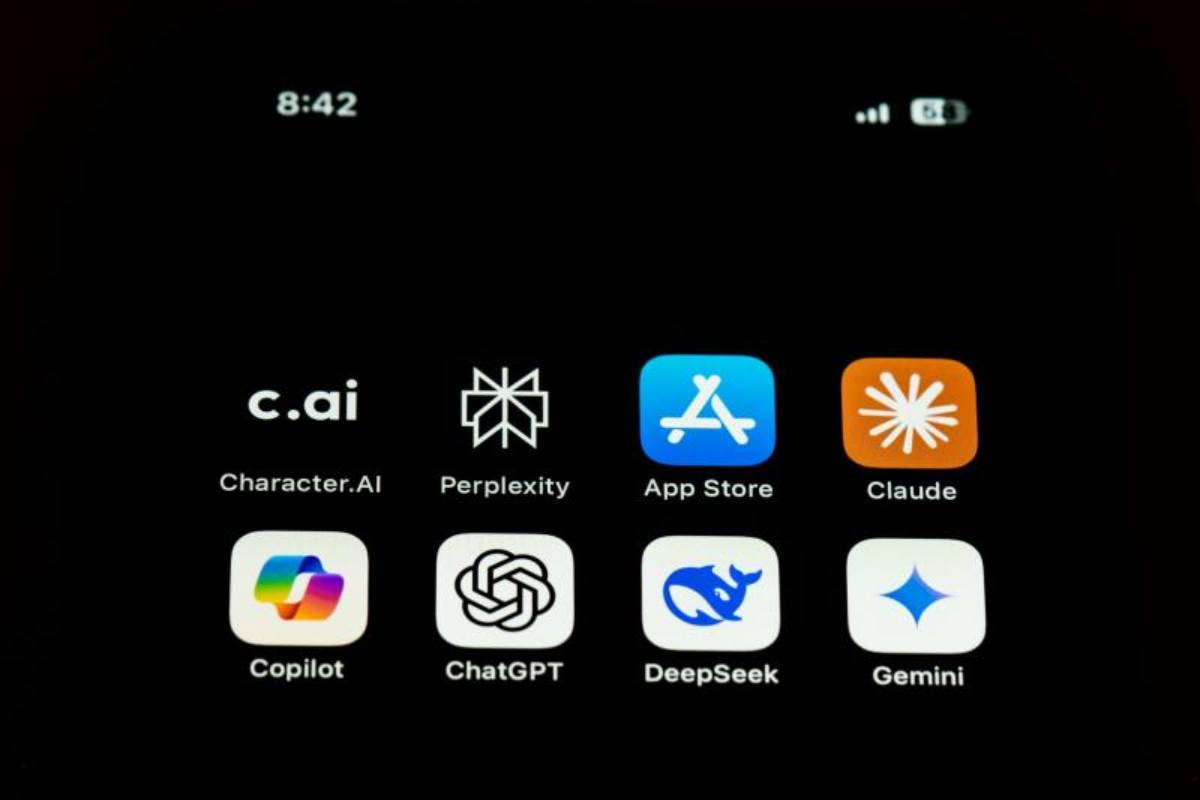

Elsa, the FDA’s internal AI tool used to assist in reviewing clinical trial documents, protocols, and regulatory submissions, is undergoing a rapid model migration following federal directives restricting the use of Claude. The agency is transitioning to Google’s Gemini and other alternative platforms without completing extensive validation, heightening risks to accuracy, consistency, and technical compliance.

Sponsors are directly impacted, as Elsa’s outputs influence regulatory decision-making and documentation standards. The accelerated migration introduces operational and data governance challenges, requiring sponsors to closely monitor AI-assisted outputs, adapt submission strategies, and manage potential compliance gaps while maintaining alignment with evolving regulatory expectations.

The Elsa migration reflects the increasing reliance of regulators on AI tools to streamline complex document analysis, improve efficiency, and maintain consistency across high-volume submissions. Generative AI platforms like Elsa have become integral in reviewing protocols, summarizing adverse events, and synthesizing scientific data, but these tools require robust validation to ensure reliability and compliance. The sudden migration from Claude to Gemini represents an unprecedented challenge, as AI outputs may vary between models, potentially affecting regulatory review consistency.

Sponsors must now navigate this evolving landscape, balancing the efficiency benefits of AI with the need for defensible, transparent documentation. Historically, fragmented AI governance and limited guidance have slowed adoption in life sciences, but Elsa’s migration underscores the necessity for structured oversight, risk management protocols, and proactive engagement between sponsors and regulators to ensure compliance and operational continuity.

Industry analysts acknowledge that AI integration offers significant efficiency gains but caution that rapid model transitions can introduce technical, regulatory, and legal risks. Experts highlight that discrepancies between outputs generated by different AI models could compromise administrative records and trigger regulatory queries or compliance challenges. Governance specialists advise sponsors to maintain detailed audit trails, document AI-assisted outputs, and establish validation protocols to safeguard data integrity.

Compliance officers emphasize that proactive monitoring and internal alignment with regulatory expectations are essential to mitigate risk. Analysts also note that the Elsa migration underscores broader considerations for AI governance, transparency, and documentation standards in regulated industries, signaling a potential shift in how regulatory agencies evaluate AI-assisted processes and manage risk across high-stakes scientific review environments.

For global life sciences sponsors, the Elsa migration may necessitate revising submission strategies, strengthening compliance frameworks, and implementing enhanced documentation practices. Investors should anticipate potential delays or additional review queries arising from model transitions. Companies that fail to track AI-assisted analyses risk operational, regulatory, and legal exposure, while proactive organizations can leverage structured validation and documentation to secure a competitive advantage.

At the policy level, Elsa’s migration highlights the need for clearer regulatory guidance on AI model transitions, validation requirements, and data governance. Executives and regulatory strategists must prioritize risk mitigation, consistent oversight, and stakeholder engagement to navigate evolving AI-assisted review processes effectively.

Looking ahead, sponsors should expect continued regulatory attention on AI-assisted review, with guidance likely to address model validation, output documentation, and data integrity standards. Decision-makers must focus on maintaining consistent audit trails, validating outputs across multiple models, and engaging with regulators to clarify expectations. While uncertainties remain regarding operational impact and compliance, organizations that proactively address technical and regulatory risks will be best positioned to use AI effectively in high-stakes life sciences workflows.

Source: Clinical Leader, Elsa’s AI Model Migration: Technical, Compliance, and Regulatory Risks for Sponsors

Date: 26 March 2026